Siva Prasad - Data Engineer

Experience

Data Engineer

Scania AB

- Built and maintained ETL/ELT data pipelines processing hourly batch data from IoT devices, handling millions of events daily with 99.9% reliability; implemented data quality checks and automated monitoring frameworks ensuring end-to-end data accuracy.

- Architected AWS and Databricks data infrastructure implementing the Medallion Architecture (Bronze/Silver/Gold layers); designed a scalable data lake on Amazon S3 supporting data scientists, analysts, and downstream BI teams.

- Developed PySpark applications on Databricks (AWS) for data extraction, transformation, and aggregation from JSON, Parquet, and Avro sources; optimized Spark jobs, reducing processing time by 40%.

- Implemented real-time data ingestion pipelines using AWS MSK (Apache Kafka) with Python and Go consumer applications; built backpressure handling and SLA monitoring ensuring reliability at large data volumes.

- Managed production EKS (Kubernetes) clusters running data processing microservices; implemented GitLab CI/CD pipelines with automated testing and deployment, reducing deployment lead time by 40%.

- Designed and maintained Infrastructure as Code using Terraform for AWS data platform; automated provisioning across dev, staging, and production environments with state management and module reusability.

- Built comprehensive monitoring and observability using Datadog, Prometheus, and Grafana; created custom dashboards for pipeline health, data quality KPIs, and SLA tracking.

- Collaborated with Data Scientists, QA Engineers, and Product Managers in agile 2-week sprints; contributed to architecture reviews, sprint planning, and technical design sessions while operating autonomously with full end-to-end ownership.

Data Engineer

CEVT (China Euro Vehicle Technology)

- Architected and implemented end-to-end data pipelines on Azure using Azure Data Factory, Azure Databricks, Azure Synapse Analytics, and Apache Kafka; designed batch data workflows processing large datasets with automatic scaling, error handling, and retry logic.

- Developed Python-based ETL/ELT jobs using Azure Databricks notebooks and Delta Live Tables for data ingestion, transformation, and loading; integrated them with the Azure Synapse Analytics data warehouse to support analytics and reporting for BI teams.

- Built event-driven streaming pipelines using Azure Functions and Apache Kafka; implemented real-time data aggregation and streaming analytics for IoT sensor data using Kafka Streams and Databricks Structured Streaming.

- Managed AKS (Azure Kubernetes Service) clusters for data processing workloads; implemented Helm charts for deployment standardization, auto-scaling policies, and resource optimization.

- Designed Terraform Infrastructure as Code for the Azure Databricks data platform; automated environment provisioning (dev, staging, production) with state management and reusable modules.

- Implemented GitLab CI/CD pipelines with automated testing and deployment.

- Built monitoring and observability stack using Prometheus and Grafana; created dashboards for data pipeline metrics, data quality monitoring, and infrastructure health tracking.

Data Engineer

Swedbank

- Designed and maintained scalable data warehouse and Lakehouse architectures on Azure Synapse Analytics and Microsoft Fabric, supporting enterprise-scale financial analytics and reporting.

- Implemented robust ETL/ELT data pipelines using Python, PySpark, SQL, and PowerShell for data ingestion, transformation, and loading; enforced data quality, consistency, and security across all stages of the data lifecycle.

- Designed and implemented modular ELT pipelines using DBT, transforming raw financial data into curated analytical datasets with reusable DBT models, tests, and documentation.

- Automated data orchestration using Azure Data Factory integrated with Snowflake tasks and DBT workflows; ensured reliable and efficient data processing with full auditability and lineage.

- Ensured data governance, data security, and compliance across all data products, in line with financial regulatory requirements.

DevOps Engineer

Credit Suisse

- Built data pipelines using Cloud Composer (Apache Airflow) and BigQuery data warehouse solutions; provisioned infrastructure with Terraform and ran containerized data workloads on GKE.

- Implemented Prometheus and Grafana monitoring for data pipeline stability.

Infrastructure Automation Engineer

IKEA AB

- Designed and managed production and pre-production environments for a high-traffic e-commerce platform.

- Automated application deployments and infrastructure provisioning.

- Implemented monitoring and alerting for high-availability systems.

Middleware Administrator

ICA, Telstra, Amdocs

- Administered SOA Suite and WebLogic/Oracle application servers.

- Maintained production environments and resolved incidents.

- Optimized performance and administered databases.

Java Developer

Thomson Reuters, Puma

- Developed full-stack Java applications using JSP, HTML, and JavaScript.

- Designed databases and developed SQL for enterprise web applications.

- Managed data for enterprise web applications.

Industry Experience

Experienced in Retail, Information Technology, Telecommunications, Media and Entertainment, Sport, and Automotive.

Business Area Experience

Experienced in Information Technology and Business Intelligence.

Summary

Senior Data Engineer and IT Consultant with 19+ years of progressive IT experience and 6+ years of specialized expertise in data engineering, cloud platforms, and modern data stack technologies. Proven track record of designing, building, and maintaining scalable ETL/ELT data pipelines that process millions of IoT events and large-scale batch data across AWS and Azure cloud environments.

Dual-cloud expert in AWS (Glue, Athena, S3, MSK) and Azure (Databricks, Data Factory, Synapse Analytics, Delta Lake), with deep hands-on proficiency in Apache Spark, Apache Kafka, Python, SQL, and DBT. Strong foundation in data warehouse design, dimensional modeling, data governance, and performance optimization. Consistently delivers production-grade infrastructure for batch processing, real-time streaming, and large-scale data pipelines in agile, sprint-based environments, owning solutions end-to-end from architecture through deployment.

Skills

- Cloud Platforms: AWS (MSK, S3, Glue, Athena, RDS, Lambda, EKS), Azure (Databricks, Data Factory, Synapse Analytics, AKS, ADLS Gen2)

- Data Engineering: ETL/ELT Pipelines, Apache Kafka, Apache Spark, PySpark, Delta Lake, Delta Live Tables, DBT, Apache Airflow, Databricks Structured Streaming

- Languages & Tools: Python, SQL, PySpark, Go, PowerShell, Terraform, Helm, Kubernetes, GitLab CI/CD

- Data & Analytics: Data Modeling, Dimensional Modeling, Data Warehousing, Data Quality, Data Governance, Medallion Architecture (Bronze/Silver/Gold)

- Monitoring & Observability: Datadog, Prometheus, Grafana

- Methodologies: Agile, Scrum, Kanban, Waterfall

Education

University of Madras

Master of Computer Applications · Computer Applications · Chennai, India

Sri Venkateswara University

Bachelor of Computer Science · Computer Science · Tirupati, India

Statistics

Experience

Global Experience

Expertise

Qualifications

Profile

Frequently asked questions

Have questions? Find more information here.

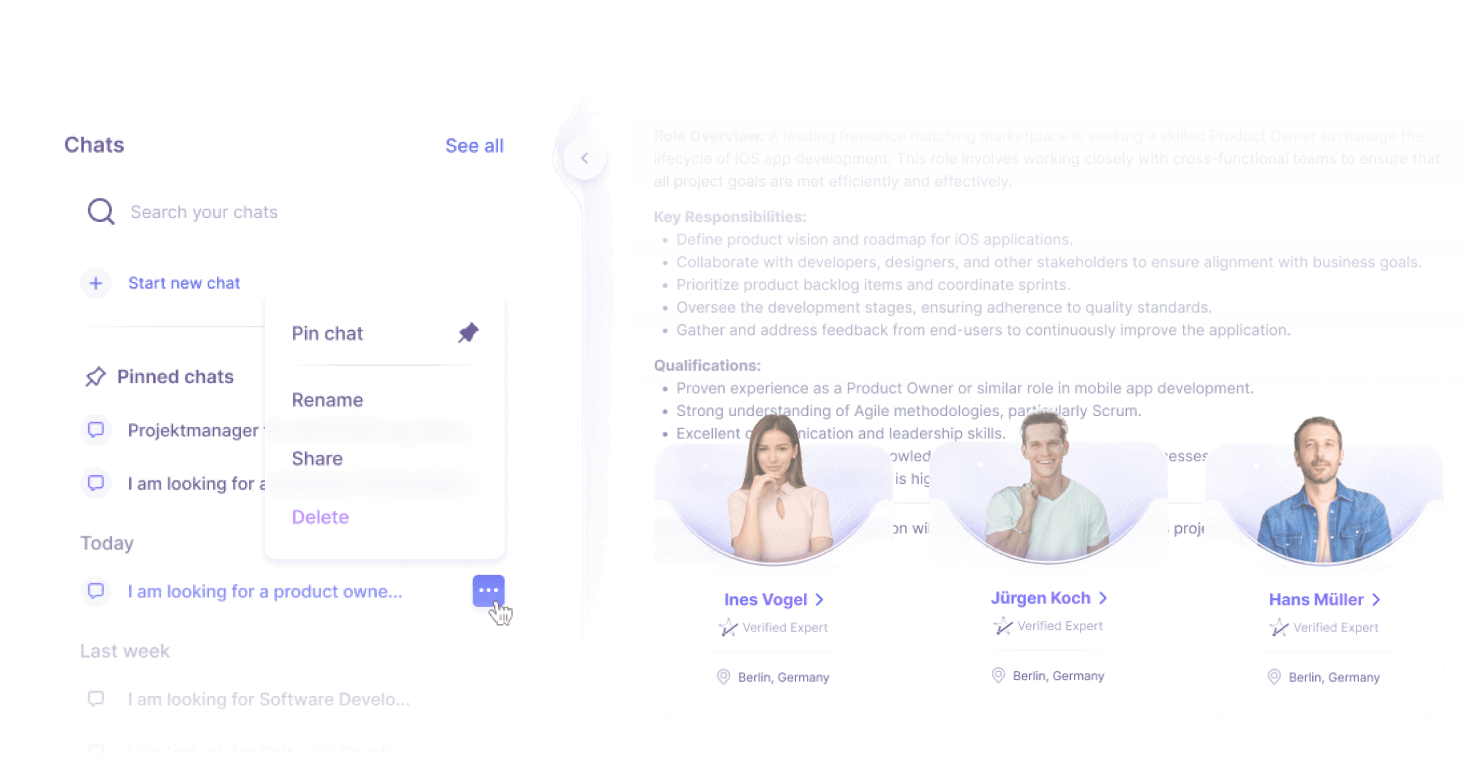

Similar Freelancers

Discover other experts with similar qualifications and experience

Experts recently working on similar projects

Freelancers with hands-on experience in comparable project as a Data Engineer